MFT Resiliency Options:

File Transfer High Availability and Disaster Recovery

As your managed file transfer (MFT) solution becomes more critical to your business, it’s important to consider file transfer resiliency, including MFT high availability (HA) options. High availability refers to a system that is continuously operational, with availability measured against “100% operational” or “never failing”.
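To make "measured against 100% operational" concrete, the short sketch below converts an availability target into the downtime it allows per year. The targets shown (99%, 99.9%, 99.99%) are illustrative assumptions, not figures from any particular MFT product.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # simplification: ignores leap years


def downtime_minutes_per_year(availability_percent: float) -> float:
    """Return the yearly downtime (in minutes) allowed by an availability target."""
    if not 0 <= availability_percent <= 100:
        raise ValueError("availability must be between 0 and 100")
    return MINUTES_PER_YEAR * (1 - availability_percent / 100)


# Illustrative targets only
for target in (99.0, 99.9, 99.99):
    minutes = downtime_minutes_per_year(target)
    print(f"{target}% available -> about {minutes:.1f} minutes of downtime per year")
```

Each extra "nine" cuts the permitted downtime by a factor of ten, which is why higher targets usually call for more nodes and more infrastructure.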

There are different ways of achieving MFT HA, depending on your chosen solution, but before you think about adding resiliency there are a few things you will need to consider.

MFT resiliency options: MFT HA or DR?

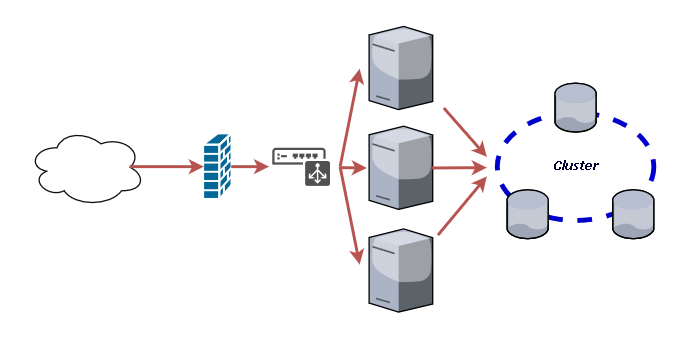

Highly available (HA) managed file transfer systems typically rely on two or more nodes, each of which can handle requests at the same time. When a single node fails, the other nodes carry on and pick up the extra load from the failed node. Many MFT systems use either a native clustering technology, such as Windows Server Failover Clustering, or proprietary heartbeats that keep nodes aware of each other. When one node fails, only the connections and transfers actively passing through that node are lost, while transfers passing through other nodes are unaffected and continue to be processed. The goal for an HA system is nearly zero downtime in the event of a failure.

Disaster Recovery (DR) systems are designed to provide resilience in the event of a more significant failure of the managed file transfer system. DR systems are typically based in a different location from the main system and only accept connections and transfers when the main system is unavailable. Network routing changes, storage and database replication, and other infrastructure changes may need to be completed before the DR system can be activated, and this can lead to a period of service downtime.

Active:Active or Active:Passive

When it comes to designing an HA system, most MFT solutions offer either an Active:Active or an Active:Passive configuration.

In an Active:Active configuration, two or more nodes are running and sharing resources such as storage and database links.

With Active:Passive HA configurations, all the load is passed through a single node and, when that node fails, another node in the system detects the failure and starts all the services required to run the system. This means that all connections are lost when the active node fails, but service typically resumes very quickly.
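The detection step in an Active:Passive setup can be pictured as a heartbeat timeout. The following is a minimal sketch, not any vendor's implementation: the `PassiveNode` class, its field names and the 5-second timeout are all hypothetical, and timestamps are passed in explicitly so the logic is easy to follow.

```python
from dataclasses import dataclass


@dataclass
class PassiveNode:
    """Hypothetical standby node that watches heartbeats from the active node."""

    timeout_seconds: float = 5.0  # assumed value; real products make this configurable
    last_heartbeat: float = 0.0
    active: bool = False

    def record_heartbeat(self, now: float) -> None:
        """Note the time at which the active node last reported in."""
        self.last_heartbeat = now

    def check(self, now: float) -> bool:
        """Promote this node if the active node has been silent for too long."""
        if not self.active and now - self.last_heartbeat > self.timeout_seconds:
            self.active = True  # a real system would start the MFT services here
        return self.active


standby = PassiveNode(timeout_seconds=5.0)
standby.record_heartbeat(now=100.0)
print(standby.check(now=103.0))  # heartbeat is 3 s old, within the timeout
print(standby.check(now=106.0))  # heartbeat is 6 s old, beyond the timeout
```

The timeout is a trade-off: a short value shortens the outage, while a longer value reduces the risk of promoting the standby because of a brief network glitch.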

Stretch HA

Some solutions are now able to offer a different MFT HA set-up. This is a hybrid HA/DR configuration, where nodes can be sited in different data centres and are able to accept connections independently of each other. They typically either write to storage that is replicated in near real time or synchronise data between nodes themselves, independently of any infrastructure. This approach is becoming more popular as it reduces the footprint of the overall solution while providing the resiliency of both HA and DR architectures. The downside is that not every MFT solution supports this architecture, and the network infrastructure required is more complicated.

Considerations

Before deciding on a resilient architecture you need to consider some or all of the following factors:

1. Infrastructure

HA and DR solutions need more infrastructure and are significantly more complicated to configure and manage. Additional servers, management interfaces and network resources all need to be factored in and made available.

2. Networking

Global and local load balancers are needed to route traffic to the correct nodes. These are usually configured either in a “round robin” arrangement, where connections are passed to each node in turn, or, on more advanced load balancers, so that connections are tracked and each new connection is passed to the least busy node. In addition, network routes and firewalls need to be configured for all nodes, including passive or DR nodes. For DR specifically, trading partners and end users may need to be able to send to alternative nodes.
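The two load-balancing strategies described above can be sketched in a few lines. This is an illustration of the selection logic only, not a real load balancer; the node names and connection counts are made up for the example.

```python
from itertools import cycle

# Hypothetical node names, for illustration only
nodes = ["mft-node-1", "mft-node-2", "mft-node-3"]


def round_robin(node_names):
    """Return an iterator that hands out nodes in turn, wrapping around."""
    return cycle(node_names)


def least_busy(active_connections):
    """Return the node that currently has the fewest tracked connections."""
    return min(active_connections, key=active_connections.get)


rr = round_robin(nodes)
print([next(rr) for _ in range(4)])  # the fourth pick wraps back to the first node

# Made-up connection counts per node
print(least_busy({"mft-node-1": 12, "mft-node-2": 3, "mft-node-3": 7}))
```

Round robin needs no state beyond the position in the list, whereas the least-busy approach depends on the load balancer tracking connections per node.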

3. Storage Replication

Typically, HA solutions share storage, but DR solutions may need storage replication between data centres. If replication only happens on a schedule, recently written data may not be available when the DR system comes up or, in some cases, the system may not be able to come up until the data has been replicated.
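The risk with scheduled replication can be shown with simple date arithmetic. The sketch below assumes a hypothetical replication run at 12:00 and a failure at 12:45; the function names and timestamps are invented for the example.

```python
from datetime import datetime, timedelta


def unreplicated_window(last_replication: datetime, failure_time: datetime) -> timedelta:
    """Return how much time had passed since the last replication run."""
    return max(failure_time - last_replication, timedelta(0))


def files_at_risk(upload_times, last_replication, failure_time):
    """Return uploads made after the last replication run and before the failure."""
    return [t for t in upload_times if last_replication < t <= failure_time]


# Hypothetical timeline
last_run = datetime(2024, 1, 1, 12, 0)
failure = datetime(2024, 1, 1, 12, 45)
uploads = [
    datetime(2024, 1, 1, 11, 50),  # already replicated
    datetime(2024, 1, 1, 12, 10),  # not yet replicated
    datetime(2024, 1, 1, 12, 40),  # not yet replicated
]

print(unreplicated_window(last_run, failure))
print(len(files_at_risk(uploads, last_run, failure)))
```

Shortening the replication interval shrinks this window, which is the reasoning behind near real-time replication for DR.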

4. Database Clustering

In a similar way to storage, databases for HA systems typically run on replicated technology. If the same database is to be used across data centres, synchronous database clustering would need to be used (rather than asynchronous approaches such as asynchronous mirroring or log shipping). Replication should be near instantaneous to avoid issues similar to those described for storage replication.

5. Proxies

Forward and reverse proxies can be very helpful in shielding incoming connections from node failures, as they typically “front” the systems and should remain unaffected when a node fails. This can be a big advantage of using these gateways, but in a DR configuration care must be taken to ensure that the proxies are failed over too in the event of a server failure.

6. Monitoring

Monitoring tools should be in place to check a variety of factors, such as whether services are running and the status of each node. If these tools detect a failure, alerts should be triggered and, if possible, automated failover scripts should be executed to bring up any additional nodes or resources.
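One common pattern is to act only after several consecutive failed checks, so that a single missed poll does not trigger a failover. The sketch below assumes hypothetical `check` and `on_failure` callables and an arbitrary threshold of three; a real monitor would also wait between polls, which is left out here to keep the example short.

```python
from typing import Callable


def monitor(
    check: Callable[[], bool],
    on_failure: Callable[[], None],
    threshold: int = 3,
    max_polls: int = 10,
) -> bool:
    """Poll a health check and run the failover action after repeated failures.

    Returns True if the failover action was triggered.
    """
    failures = 0
    for _ in range(max_polls):
        if check():
            failures = 0  # a healthy result resets the counter
        else:
            failures += 1
            if failures >= threshold:
                on_failure()  # e.g. raise an alert and run a failover script
                return True
    return False


# Simulated check results: one healthy poll followed by three failures
results = iter([True, False, False, False])
events = []
triggered = monitor(lambda: next(results), lambda: events.append("failover"),
                    threshold=3, max_polls=4)
print(triggered, events)
```

In practice the `check` callable might test whether a service is running or a port is answering, and `on_failure` would call whatever failover script the environment provides.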

Using virtualisation tools to achieve DR

To simplify the whole HA/DR architecture, many organisations now leverage virtualisation tools such as VMware Site Recovery Manager (SRM) or vSphere vMotion. These can move the virtual server instance to another virtual environment, together with all associated resources.

If servers are physical, some solutions offer DR tools that can automatically trigger remote instances of the services using simple monitoring.

In mission-critical B2B workflows, achieving maximum uptime is of paramount importance. The type of resiliency your business requires may even determine your choice of solution. However, that choice may also be driven by infrastructure limitations or simply by the way in which the vendor charges for the additional nodes required to deliver it.
